Top 5 Ways to Make a Video Unique for Social Media in 2026

Whether you are running a compilation channel, a meme page, a news aggregator, or cross-posting your own content, making a video truly unique is the only way to avoid detection in 2026.

Platforms have invested billions in fingerprinting technology, and the bar for what counts as "unique" keeps rising every year.

We ranked the five most common methods from the most effective to the least. The results may surprise you.

1. Re-Shooting the Content (100% Effective)

The most effective way to make a video unique is to re-create it from scratch. If you film the same scene yourself, with your own camera, at your own location, the result is a completely original video with zero fingerprint overlap. No detection system can match it against the original because it is not the same video at all.

Why it works: Every aspect of the video is different: the camera sensor noise, the lighting conditions, the exact framing, the audio environment, the metadata. There is nothing for detection algorithms to match against.

Why it is impractical: For the vast majority of use cases, re-shooting is impossible. You cannot re-shoot a viral moment, a news clip, a movie scene, or someone else's content. Even for your own content, re-filming a video just to post it on a different platform is absurdly time-consuming.

Best for: Original content creators who can plan multiple variations during the initial shoot. If you are filming content specifically for cross-posting, shooting slightly different versions for each platform during production is the gold standard. But this only works for planned content, not for reposting existing content.

2. Adversarial AI Modification with MetaGhost (~99% Effective)

Adversarial AI modification uses neural networks to generate invisible perturbations on each frame of a video. These perturbations change the mathematical fingerprint that platforms compute without altering the visual content that humans see. The video looks and sounds identical to the original, but detection systems almost always treat it as new, original content.

Why it works: Adversarial perturbation operates at the same level as the detection algorithms. It targets the exact features that fingerprinting models analyze: deep learned representations, perceptual hashes, and spectral audio patterns. By modifying these features directly, it defeats all three layers of detection simultaneously.

How it compares to re-shooting: The only reason adversarial AI is not rated 100% is that no automated system can guarantee absolute perfection across every possible detection system in every possible scenario. In practice, MetaGhost achieves bypass rates above 99% on every major platform including YouTube, Instagram, TikTok, Facebook, Snapchat, and X/Twitter.

What makes it unique among these methods: Unlike every other technique on this list, adversarial AI does not degrade the video quality. There is no visible change, no audio distortion, no loss of resolution. The output is perceptually identical to the input, which is why it is the only method that combines high effectiveness with zero quality compromise.

Best for: Anyone who needs to repost or cross-post video content at any scale. Compilation channels, reaction channels, meme pages, marketing teams, content aggregators, and individual creators who want to use the same video across multiple platforms.

3. Heavy Re-Editing (60-70% Effective)

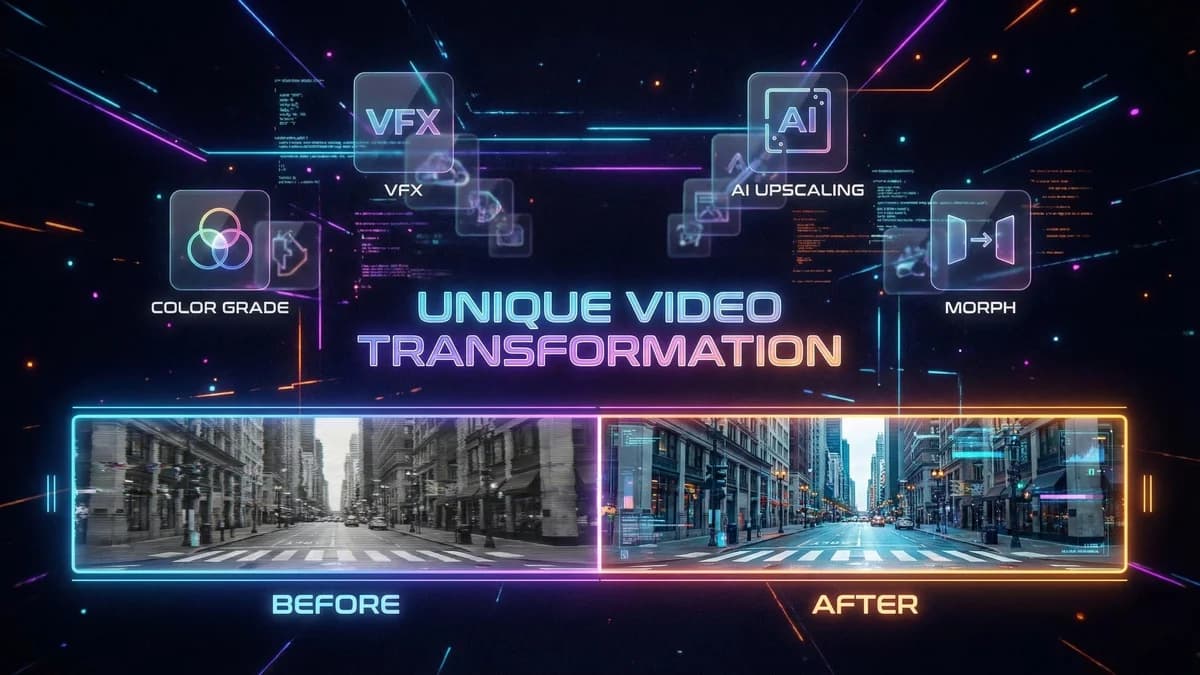

Heavy re-editing means substantially transforming the video in a video editor: cutting and rearranging scenes, adding new transitions, overlaying new graphics, changing the aspect ratio, adding a new intro and outro, and applying significant color grading. This is not a simple crop or filter but a genuine creative reworking of the source material.

Why it partially works: When you rearrange scenes, change the sequence of frames, and add significant new visual elements, you break the temporal fingerprint that video detection relies on. The more you change the structure of the video, the harder it is for detection systems to match it against the original.

Why it is only 60-70% effective: Modern detection systems analyze individual segments independently. Even if you rearrange 10 clips, each individual clip is still matched against the database. YouTube's Content ID, for example, can identify a 10-second segment within a 30-minute video (see our guide on how to repost on YouTube without copyright strikes). Additionally, audio fingerprinting will catch any unmodified audio regardless of video edits.
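To make the segment-by-segment point concrete, here is a minimal toy sketch. It assumes each clip has already been reduced to a single integer fingerprint and that matching is exact; both are simplifications chosen for illustration, and the function names are hypothetical. It is not a description of how Content ID or any real platform is implemented.

```python
from typing import Dict, List, Tuple

def build_index(reference: List[int]) -> Dict[int, int]:
    """Map each segment fingerprint to its position in the reference video."""
    return {fp: pos for pos, fp in enumerate(reference)}

def match_segments(upload: List[int], index: Dict[int, int]) -> List[Tuple[int, int]]:
    """Return (upload_position, reference_position) for every segment that matches."""
    return [(i, index[fp]) for i, fp in enumerate(upload) if fp in index]

# Hypothetical fingerprints for five clips of the original video.
reference = [101, 102, 103, 104, 105]

# Re-edited upload: new intro (900), clips reordered, one clip dropped, new outro (901).
upload = [900, 104, 102, 105, 101, 901]

matches = match_segments(upload, build_index(reference))
matched_fraction = len(matches) / len(upload)

print(matches)  # [(1, 3), (2, 1), (3, 4), (4, 0)]
```

Because every segment is looked up on its own, reordering changes only where the matches land, not whether they occur. Four of the six segments in this toy upload still match.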

The time problem: Heavy re-editing takes significant time and skill. Depending on the video length, you might spend 30 minutes to several hours reworking a single video. For anyone processing more than a few videos per week, this is not scalable.

Best for: Creators who want to add genuine creative value to existing content and do not mind the time investment. If you are making a commentary or analysis video that happens to include source material, heavy editing is a natural part of the creative process.

4. Re-Encoding with a Different Codec (30% Effective)

Re-encoding means exporting the video with different compression settings: changing from H.264 to H.265/HEVC, adjusting the bitrate, modifying the frame rate, or changing the container format from MP4 to MKV. Some people also change the resolution (e.g., from 1080p to 720p and back to 1080p).

Why it occasionally works: Re-encoding introduces compression artifacts that are slightly different from the original. At very aggressive compression levels (low bitrate), enough visual information is destroyed that the perceptual hash may change. The new file also has different container metadata.

Why it mostly fails: Detection systems analyze the decoded visual frames, not the encoding format. Whether your video is H.264 or H.265, the frames look virtually identical when decoded. The only scenario where re-encoding helps is when you drastically reduce quality, which makes the video look terrible and defeats the purpose of sharing it.
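The difference between a file hash and a hash of the decoded frame can be sketched in a few lines. This is a toy model under stated assumptions: the "frame" is an 8x8 grid of grayscale values, the container header bytes are made-up labels, and mild compression error is imitated by nudging each pixel by one level. No real codec is involved, and the average hash used here is a simple stand-in for whatever perceptual features a platform actually computes.

```python
import hashlib
from typing import List

Frame = List[List[int]]  # grayscale pixel values, 0-255

def file_hash(container_header: bytes, frame: Frame) -> str:
    """Exact hash of the stored bytes; any byte change alters it."""
    payload = container_header + bytes(p for row in frame for p in row)
    return hashlib.sha256(payload).hexdigest()

def average_hash(frame: Frame) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

# Toy 8x8 frame: dark left half, bright right half.
original = [[(40 if x < 4 else 200) + 2 * (x + y) for x in range(8)]
            for y in range(8)]

# Stand-in for mild compression error: every pixel moves by +1 or -1.
reencoded = [[p + (1 if (x + y) % 2 == 0 else -1) for x, p in enumerate(row)]
             for y, row in enumerate(original)]

files_differ = file_hash(b"mp4/h264", original) != file_hash(b"mkv/hevc", reencoded)
frames_match = average_hash(original) == average_hash(reencoded)

print(files_differ, frames_match)  # True True
```

The stored bytes differ, so a plain file hash changes, but the hash computed from the decoded picture is identical. That is the gap between checking files and checking frames.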

The audio problem: Even if re-encoding the video component slightly changes the visual fingerprint, audio fingerprinting is a separate check. Re-encoding audio at a different bitrate rarely changes the spectral fingerprint enough to avoid a match. You would need to re-encode audio at extremely low quality (64 kbps or lower) to have any effect, which makes it sound awful.

Best for: Almost no one. The only use case is if you are uploading to a platform with extremely basic detection that only checks file hashes, not perceptual hashes. Such platforms barely exist in 2026.

5. Simple Filters and Crops (10% Effective)

This category includes all the quick fixes people try first: applying a color filter, cropping the video slightly, adding a border or frame, mirroring/flipping the video, adding a small speed change (1.05x), overlaying a watermark, or adding background music.

Why it almost never works: Every single one of these modifications is something that detection systems are specifically designed to handle. Perceptual hashing survives crops, filters, and mirrors by design. AI models are trained on augmented datasets that include all of these variations. Audio fingerprinting normalizes for speed and pitch changes.
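As a rough illustration of "survives filters and mirrors by design", the sketch below uses a difference hash, which records only whether each pixel is darker than its right-hand neighbour. The 8x9 toy frame, the `color_filter` helper, and the idea of also hashing the mirrored upload are assumptions made for this example; production systems use more elaborate and undisclosed features.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixel values, 0-255

def dhash(frame: Frame) -> int:
    """Difference hash: one bit per horizontally adjacent pixel pair."""
    bits = 0
    for row in frame:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if left < right else 0)
    return bits

def color_filter(frame: Frame, gain: float, offset: int) -> Frame:
    """Brightness/contrast style filter, clamped to the 0-255 range."""
    return [[max(0, min(255, round(p * gain + offset))) for p in row]
            for row in frame]

def mirror(frame: Frame) -> Frame:
    """Flip the frame horizontally."""
    return [list(reversed(row)) for row in frame]

def matches(upload: Frame, reference: Frame) -> bool:
    """Compare the upload and its mirror image against the reference hash."""
    ref = dhash(reference)
    return dhash(upload) == ref or dhash(mirror(upload)) == ref

# Toy frame: 8 rows of 9 pixels gives 8 comparisons per row, 64 bits in total.
frame = [[(x * 37 + y * 11) % 100 + 50 for x in range(9)] for y in range(8)]

filtered = color_filter(frame, gain=1.2, offset=10)
flipped_and_filtered = mirror(filtered)

print(dhash(filtered) == dhash(frame))       # True
print(matches(flipped_and_filtered, frame))  # True
```

The filter shifts every pixel value but leaves the brighter-or-darker relationships between neighbours intact, so the hash does not move. The mirror is caught simply by checking the flipped version as well.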

The compound problem: Even combining multiple simple edits (crop + filter + mirror + speed change) rarely crosses the threshold needed to defeat modern detection. You might change the perceptual hash by 10-15%, which still leaves the video 85-90% similar to the original, and platforms typically flag matches at 75-85% similarity. To fall below that range you would need to change the hash by 25% or more, which requires modifications so aggressive that the video quality suffers noticeably.
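The arithmetic behind those percentages can be worked through with a 64-bit hash. This sketch assumes similarity is measured as the share of matching bits and that a match is flagged at or above the threshold. The 75% and 85% thresholds are the figures quoted above; the hash values and function names are invented for the example, and real platforms do not publish their scoring.

```python
def similarity(hash_a: int, hash_b: int, bits: int = 64) -> float:
    """Fraction of bits that are identical between two hashes."""
    differing = bin(hash_a ^ hash_b).count("1")
    return 1 - differing / bits

def is_flagged(sim: float, threshold: float) -> bool:
    """A match is flagged when similarity is at or above the threshold."""
    return sim >= threshold

original = 0x0F0F0F0F0F0F0F0F

# Light edits: 8 of 64 bits change (12.5%).
light_edit = original ^ 0xFF
# Aggressive edits: 20 of 64 bits change (31.25%).
heavy_edit = original ^ 0xFFFFF

light_sim = similarity(original, light_edit)
heavy_sim = similarity(original, heavy_edit)

print(light_sim, is_flagged(light_sim, 0.85))  # 0.875 True
print(heavy_sim, is_flagged(heavy_sim, 0.75))  # 0.6875 False
```

A 12.5% change still scores 87.5% similarity, which is flagged even at the stricter end of the quoted range. Only the much larger change drops below the 75% line.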

The false confidence problem: The most dangerous aspect of simple edits is that they sometimes work once and create false confidence. You crop a video and it goes through on TikTok. So you do it again, and again, and suddenly your account is shadowbanned because the platform flagged the pattern retroactively. A technique that works intermittently is worse than one that never works, because it encourages you to build a strategy on an unreliable foundation.

Best for: No serious use case. If you are reposting content at any meaningful scale, simple edits will get your account flagged eventually.

The Clear Winner

Among practical, scalable approaches, adversarial AI modification is the clear winner. Re-shooting is technically more effective but impractical for reposting. Heavy re-editing works partially but does not scale. Re-encoding and simple edits are largely ineffective against modern detection. For a broader look at both image and video solutions, see our comparison of the best tools to make your content unique.

Adversarial AI is the only approach that is:

- Highly effective (~99% bypass rate across all major platforms)

- Quality-preserving (no visible or audible changes)

- Scalable (automated processing, no manual work)

- Consistent (results are repeatable, not intermittent)

- Future-proof (targets the detection models directly, adapting as they evolve)

Get Started

Ready to make your videos truly unique? Sign up for MetaGhost and process your videos with adversarial AI. One upload, automatic processing, and a unique output that bypasses every platform's detection. No manual editing required.
